I Built Something A Few Weeks Ago

My mother-in-law needed a clinical trial. My wife and I, both doctors, had a hard time finding the right one. I decided to try to solve it.

February 19, 2026

I’m genuinely bad at directions. Embarrassingly bad.

But it hasn’t mattered in years. I land in a new city, and within minutes I know exactly how to get anywhere.

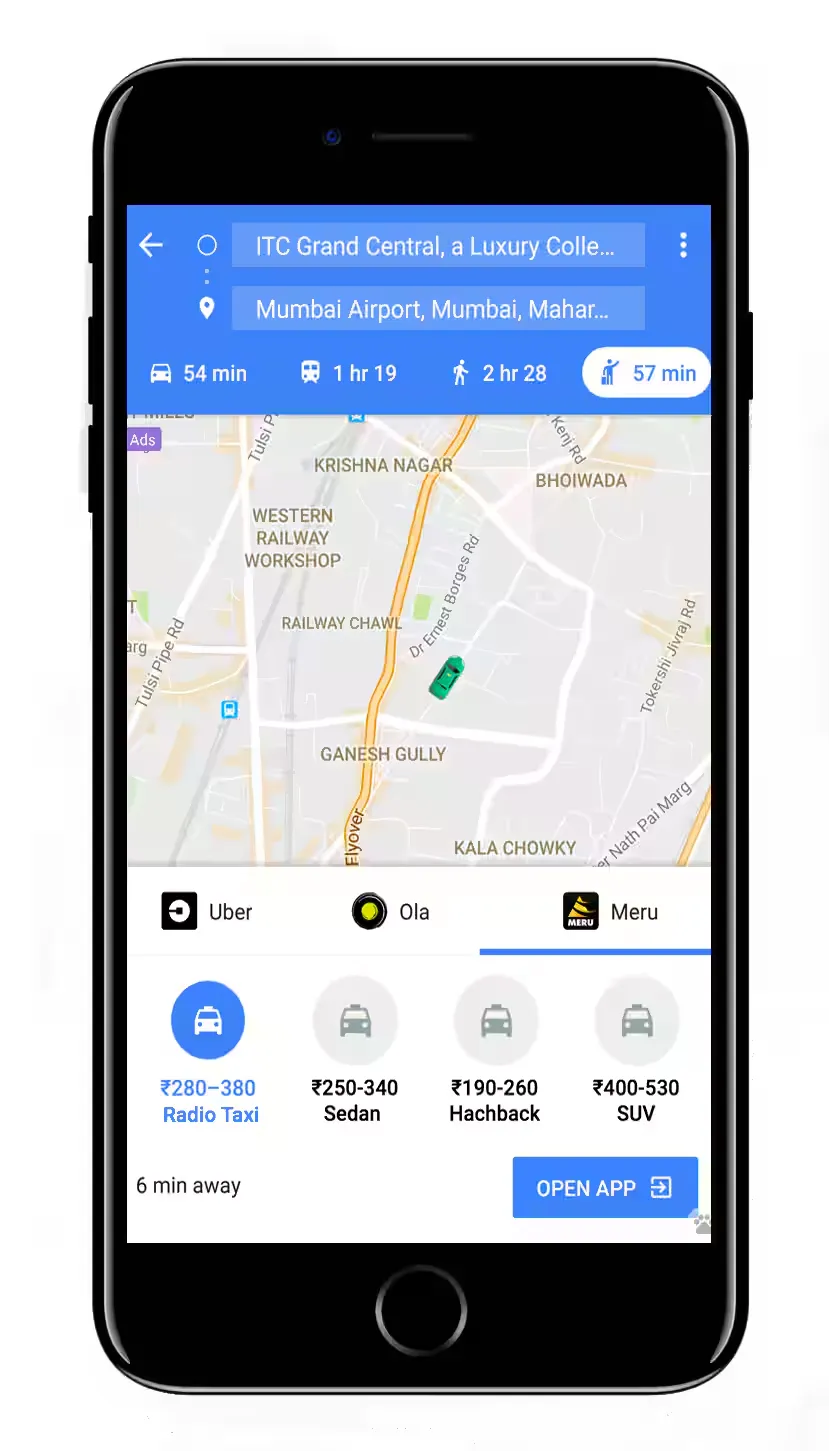

I just open Google Maps.

I compare taxi fares against the metro. I check if there’s a bus that gets me there in the same time for a third of the price. I walk when it makes sense. I have options, and I can evaluate all of them instantly.

Fifteen years ago, none of this was possible. You got in a taxi and trusted the driver. The good drivers had spent years memorizing routes, shortcuts, and which streets to avoid at rush hour. That knowledge was rare and valuable. Your experience depended entirely on which driver you got.

But Google Maps didn’t appear out of nowhere. GPS satellites had to launch. Coordinates had to be standardized. Mobile phones happened. Then mobile internet. Then smartphones. Only then could someone build a great navigation app on top of all that infrastructure.

Healthcare today is still like getting into a taxi in 1995. You don’t pick the route. You don’t compare options. You don’t even know what the ride will cost until it’s over. You just get in and trust the driver.

But that’s starting to change.

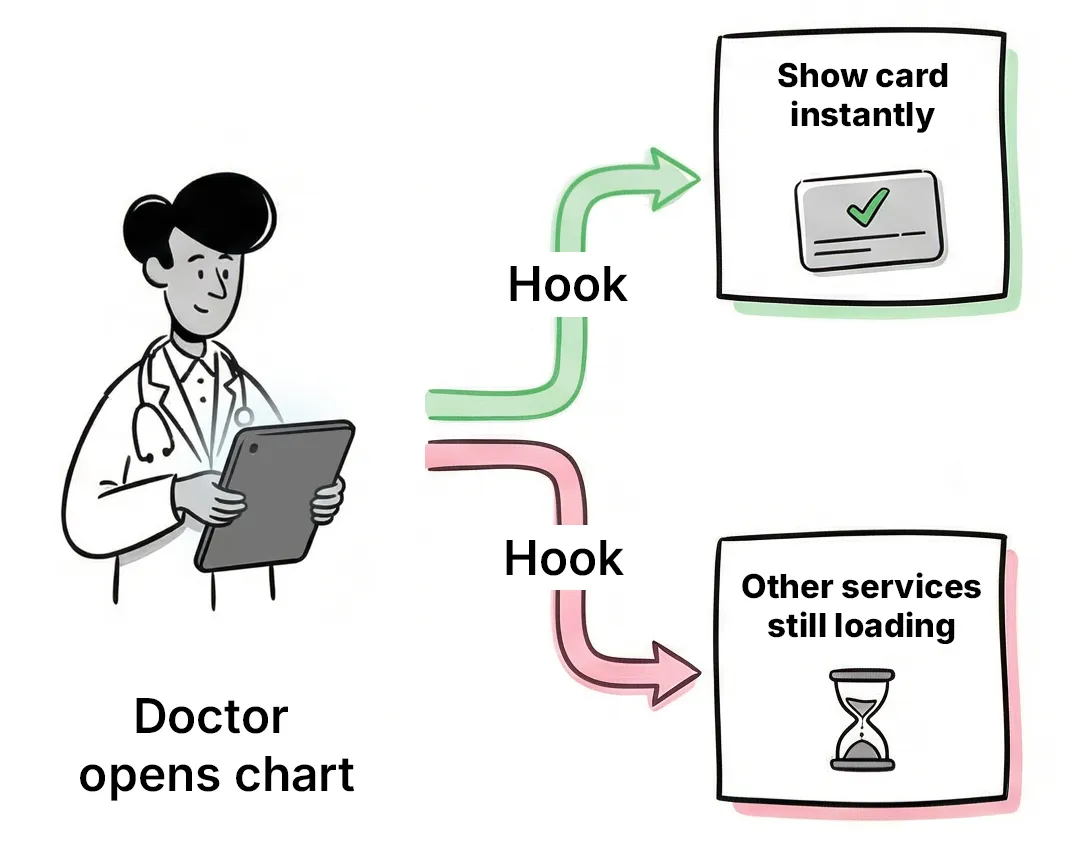

When most people talk about technology in healthcare, they mean giving clinicians better tools. Clinical decision support. AI that suggests diagnoses. Smarter alerts in the EHR. Last week I wrote about CDS Hooks and how they’re becoming a gateway into the practitioner’s workflow. This is the equivalent of putting GPS in the taxi driver’s car.
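For readers who haven’t seen one, a CDS Hooks response is plain JSON. When a hook such as `patient-view` fires, the EHR calls a service, and the service answers with "cards" the clinician sees in their workflow. Below is a minimal Python sketch of such a response. The function name, service label, and app URL are hypothetical; the field names (`summary`, `indicator`, `source`, `links`, and a link `type` of `smart`) follow the CDS Hooks card format as I understand it.

```python
import json
import uuid


def build_cds_response(summary: str, app_url: str) -> dict:
    """Return a CDS Hooks service response containing a single card (hypothetical helper)."""
    card = {
        "uuid": str(uuid.uuid4()),
        # The spec asks for a one-sentence summary shorter than 140 characters.
        "summary": summary[:139],
        # Must be one of "info", "warning", or "critical".
        "indicator": "info",
        # Hypothetical service name shown to the clinician.
        "source": {"label": "Example trial-matching service"},
        "links": [
            {
                "label": "Open trial matcher",
                # Hypothetical launch URL. Type "smart" asks the EHR to launch it
                # as a SMART on FHIR app instead of opening a plain web page.
                "url": app_url,
                "type": "smart",
            }
        ],
    }
    return {"cards": [card]}


response = build_cds_response(
    "2 open clinical trials may match this patient",
    "https://apps.example.org/trial-matcher/launch",
)
print(json.dumps(response, indent=2))
```

The link is the interesting part: it turns a passive alert into an entry point for an app that runs outside the EHR.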

And it’s happening. UpToDate is already used 1.6 million times a day across 50,000 hospitals. Two-thirds of US physicians now use AI in practice. OpenEvidence alone handles 15 million consultations a month.

As these tools spread, nurses will do more primary care, and primary care doctors will do more specialist work.

This is important work. But to me, it’s not the interesting part.

It’s like giving the chauffeur GPS. You’re still in the back seat. Someone else is still driving.

Last year I met e-Patient Dave in Minneapolis. He’s been advocating for patient empowerment for over a decade. One thing he said stuck with me: when a patient presents a doctor with a better diagnosis than the one the doctor made, the reaction isn’t gratitude. It’s an identity crisis. “If the patient knows more than me, who am I?”

In med school, I kept a document of just the most commonly asked exam questions. It became wildly popular with my classmates. Meanwhile, friends who tried to study one subject in depth sometimes failed their exams. The system rewards breadth over depth.

Specialization doesn’t fix this. You’re still trying to know something about everything in your field. It’s only at the super-speciality or sub-speciality level, after 10+ years of training, that a doctor finally gets to know everything about one thing.

A patient with a specific condition has one thing to study. Theirs. With enough motivation, internet access, and now AI, they can surpass most non-super-specialists on that condition within weeks. Think about how much time you spend researching your next phone or car. Patients facing a serious diagnosis have far more at stake and far more reason to go deep.

Giving clinicians better tools is like putting GPS in the taxi driver’s car. But Google Maps didn’t just help drivers. It gave passengers the ability to see the route, compare options, check prices, and choose for themselves. That’s the part nobody’s talking about in healthcare.

For patients to navigate their own care, they need three things: their health data, pricing information, and something smart enough to help them make sense of it all. All three are falling into place faster than most people realize.

I wrote recently about personal health records (PHRs) and how information blocking rules are forcing health systems to expose patient data through standard APIs. Pulling your records from any major EHR is already possible today. That’s the health data layer.
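In practice, that standard API is HL7 FHIR. As a rough illustration, here is how a patient-facing app might ask a FHIR R4 server for lab results using only Python’s standard library. The base URL, token, and function name are placeholders; a real app would obtain the token through a SMART on FHIR authorization flow, which this sketch skips.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical placeholders: a real app discovers the base URL from the
# EHR's endpoint directory and gets the token from SMART on FHIR auth.
FHIR_BASE = "https://fhir.example-ehr.com/r4"
ACCESS_TOKEN = "replace-with-a-real-token"


def lab_results_request(patient_id: str) -> Request:
    """Build (but do not send) a FHIR search for a patient's lab Observations."""
    # Standard FHIR search parameters on Observation: patient and category.
    query = urlencode({"patient": patient_id, "category": "laboratory"})
    return Request(
        f"{FHIR_BASE}/Observation?{query}",
        headers={
            "Accept": "application/fhir+json",
            "Authorization": f"Bearer {ACCESS_TOKEN}",
        },
    )


req = lab_results_request("123")
print(req.full_url)
# https://fhir.example-ehr.com/r4/Observation?patient=123&category=laboratory
```

Sending the request with `urllib.request.urlopen(req)` should return a FHIR `Bundle` of `Observation` resources, assuming the server supports the `patient` and `category` search parameters.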

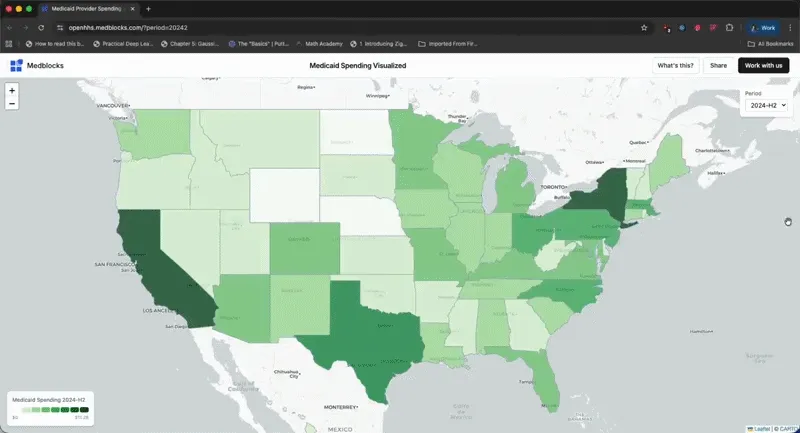

Then there’s pricing. CMS now requires every hospital to publicly disclose pricing for all services in machine-readable files. Starting April 2026, they’ll have to publish actual payment amounts, not estimates. On February 8, HHS released 227 million Medicare claims records from 2018 to 2024. Our CTO Poornachandra linked this data with NPI and CPT codes and built a free explorer on our site. You can browse a map of the US down to individual ZIP codes and see what Medicare actually paid any provider for any procedure.
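The exact pipeline behind the explorer isn’t described here, so treat this as a hypothetical sketch of the general idea: join each claim to a provider location through its NPI, then average payments per ZIP code and procedure code. The field names, lookup tables, function name, and sample rows are all made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Claim:
    npi: str     # provider's National Provider Identifier
    cpt: str     # procedure code
    paid: float  # amount Medicare paid, in USD


# Hypothetical lookup tables. A real pipeline would load the NPI registry
# and a procedure-code reference file instead of these literals.
NPI_TO_ZIP = {"1111111111": "55401", "2222222222": "55401", "3333333333": "10001"}
CPT_NAMES = {"99213": "Office visit, established patient"}


def paid_by_zip_and_code(claims):
    """Average payment per (ZIP, procedure code), skipping claims whose NPI is unknown."""
    buckets = defaultdict(list)
    for c in claims:
        zip_code = NPI_TO_ZIP.get(c.npi)
        if zip_code is None:
            continue  # provider not found in the registry extract
        buckets[(zip_code, c.cpt)].append(c.paid)
    return {
        key: {
            "procedure": CPT_NAMES.get(key[1], "unknown"),
            "claims": len(amounts),
            "avg_paid": round(mean(amounts), 2),
        }
        for key, amounts in buckets.items()
    }


# Made-up sample rows; the last NPI is deliberately missing from the lookup.
claims = [
    Claim("1111111111", "99213", 80.0),
    Claim("2222222222", "99213", 90.0),
    Claim("3333333333", "99213", 110.0),
    Claim("9999999999", "99213", 70.0),
]
for key, row in sorted(paid_by_zip_and_code(claims).items()):
    print(key, row)
```

At the scale of hundreds of millions of rows, the same join-then-group logic would typically move into a database or a distributed engine rather than Python dictionaries.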

And then there’s AI. This one’s obvious. People are already sharing health concerns with ChatGPT. Consumer AI is good enough to reason about medical information today. What’s been lacking is the data to feed it.

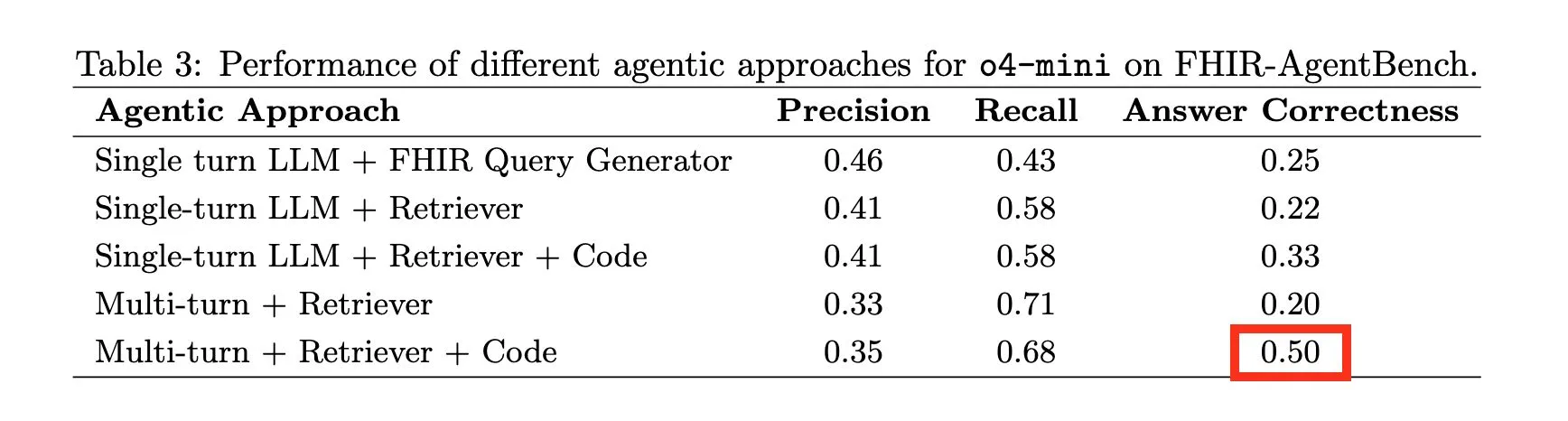

But the data isn’t ready out of the box either. The pricing transparency machine-readable files are so massive and full of duplicates that making sense of them requires big data analysis techniques. Patient data pulled through FHIR APIs is structured in ways that even the latest models struggle with. I wrote about this in my post on why AI agents fail on EHR data: agents querying real EHR data perform horribly (50% accuracy), even on straightforward questions. There’s still a lot of work to be done.
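To see why raw FHIR is hard on models, look at how deeply a single lab value is nested: the test name sits under `code.coding`, the number under `valueQuantity`, the date under `effectiveDateTime`. One common preprocessing idea (an assumption here, not the method from that post) is to flatten each `Observation` into a short line before handing it to a model. A minimal Python sketch with a hypothetical function name and a made-up sample result:

```python
def flatten_observations(bundle: dict) -> list[str]:
    """Turn a FHIR search Bundle of Observations into one 'date | test | value' line each."""
    rows = []
    for entry in bundle.get("entry", []):
        obs = entry.get("resource", {})
        if obs.get("resourceType") != "Observation":
            continue  # bundles can also carry OperationOutcome and other resources
        code = obs.get("code", {})
        codings = code.get("coding", [])
        # Prefer the free-text name, then the first coding's display name.
        name = code.get("text") or (codings[0].get("display") if codings else None) or "unknown test"
        qty = obs.get("valueQuantity")
        value = f"{qty.get('value')} {qty.get('unit', '')}".strip() if qty else "no numeric value"
        date = obs.get("effectiveDateTime", "")[:10] or "unknown date"
        rows.append(f"{date} | {name} | {value}")
    return sorted(rows)


# Made-up sample: one HbA1c result (LOINC 4548-4) in a search Bundle.
bundle = {
    "resourceType": "Bundle",
    "type": "searchset",
    "entry": [
        {"resource": {
            "resourceType": "Observation",
            "status": "final",
            "code": {"coding": [{"system": "http://loinc.org", "code": "4548-4",
                                 "display": "Hemoglobin A1c/Hemoglobin.total in Blood"}]},
            "effectiveDateTime": "2025-11-03T09:30:00Z",
            "valueQuantity": {"value": 6.1, "unit": "%"},
        }},
    ],
}
print(flatten_observations(bundle))
```

Even this toy version loses information: results stored in `component` (blood pressure panels, for example) or as `valueCodeableConcept` come out as "no numeric value", which hints at how much work real normalization takes.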

And that’s the gap. The GPS satellites have launched. We have a standard coordinate system. We have algorithms smart enough to make sense of it all. But nobody’s built Google Maps for healthcare yet. That’s the opportunity.

If you’re building in this space, or thinking about it, I’d love to talk. Reply to this email or book a call with me.
